Hi everyone,

I'm Daniel, and I'm starting to work on the Interval Timer feature for modeling object behaviors. Before diving into the code, I wanted to share my understanding of the issue and get feedback to make sure I'm on the right track.

After reading the documentation and exploring the codebase, here is my current understanding:

What does the interval timer do?

The timer works like a clock that measures the time elapsed since the last significant event. Instead of representing time continuously, it uses discrete “time cells”. Each tick represents one interval, and when a significant event happens, the timer resets to tick 0.
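
To check that I have the basic behavior right, here is a minimal sketch of a tick counter with a reset. It is only an illustration, not an existing Monty class: the name `IntervalTimer`, `num_time_cells`, and the method names come from my plan further down, and saturating at the last tick (instead of wrapping around) is just one possible answer to my open question about what happens when no reset arrives.

```python
class IntervalTimer:
    """Hypothetical sketch: counts discrete ticks since the last significant event."""

    def __init__(self, num_time_cells: int = 12) -> None:
        if num_time_cells < 1:
            raise ValueError("num_time_cells must be at least 1")
        self.num_time_cells = num_time_cells
        self._tick = 0  # index of the currently active time cell

    def get_current_tick(self) -> int:
        return self._tick

    def step(self) -> int:
        # Advance by one interval. Assumption: saturate at the last time cell
        # rather than wrapping around (still an open question).
        self._tick = min(self._tick + 1, self.num_time_cells - 1)
        return self._tick

    def reset(self) -> None:
        # A significant event sends the timer back to tick 0.
        self._tick = 0


timer = IntervalTimer(num_time_cells=4)
ticks = [timer.step() for _ in range(5)]
print(ticks)  # [1, 2, 3, 3, 3]
timer.reset()
print(timer.get_current_tick())  # 0
```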

The main purpose is to improve the learning of dynamic behaviors.

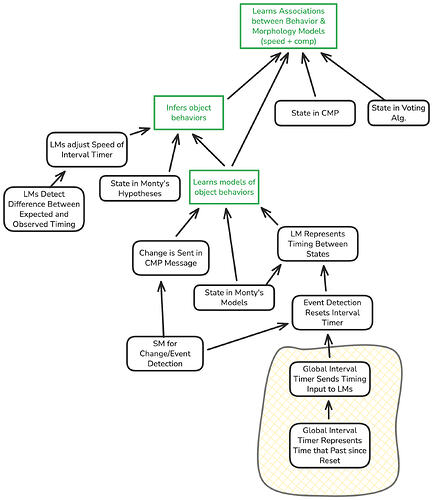

How does it integrate with the architecture?

The timer maps to the cortical column layers like this:

- L1 receives the current tick from the timer (broadcast to all LMs)

- L4 receives features from the Sensor Module (already implemented)

- L5b stores the current state in the sequence (new, advances when timer resets)

- L6a stores spatial location (already implemented)

The timer would be a global component in MontyBase that broadcasts its state to all Learning Modules through the existing step flow.
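
A rough sketch of how I picture the broadcast. `ToyMonty`, `ToyLM`, and `SimpleTimer` are hypothetical stand-ins, not the real `MontyBase` or LM classes, and the `uses_time` flag is my own assumption. The timer is optional (as proposed below), every LM receives the same tick within a step, and static LMs just drop it.

```python
from dataclasses import dataclass, field
from typing import Any, List, Optional


class SimpleTimer:
    """Minimal stand-in for the proposed IntervalTimer (hypothetical)."""

    def __init__(self, num_time_cells: int = 12) -> None:
        self.num_time_cells = num_time_cells
        self.tick = 0

    def step(self) -> None:
        self.tick = min(self.tick + 1, self.num_time_cells - 1)


@dataclass
class ToyLM:
    """Hypothetical learning module that just records the ticks it receives."""

    uses_time: bool = True
    received: List[Optional[int]] = field(default_factory=list)

    def step(self, observation: Any, tick: Optional[int]) -> None:
        # Static-morphology LMs ignore the temporal signal.
        self.received.append(tick if self.uses_time else None)


class ToyMonty:
    """Hypothetical step loop with one global, optional timer."""

    def __init__(self, lms: List[ToyLM], timer: Optional[SimpleTimer] = None) -> None:
        self.lms = lms
        self.timer = timer

    def step(self, observation: Any) -> None:
        # Broadcast the same tick to every LM; None when no timer is configured.
        tick = self.timer.tick if self.timer is not None else None
        for lm in self.lms:
            lm.step(observation, tick)
        if self.timer is not None:
            self.timer.step()


dynamic_lm, static_lm = ToyLM(), ToyLM(uses_time=False)
monty = ToyMonty([dynamic_lm, static_lm], timer=SimpleTimer())
for obs in range(3):
    monty.step(obs)
print(dynamic_lm.received)  # [0, 1, 2]
print(static_lm.received)  # [None, None, None]

legacy_lm = ToyLM()
ToyMonty([legacy_lm]).step("obs")  # no timer: existing behavior
print(legacy_lm.received)  # [None]
```

Reading the tick before advancing the timer keeps all LMs in sync for a given step.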

Main components to implement

- IntervalTimer class with methods like get_current_tick(), reset(), step(), and set_speed()

- Modifications to the State class to include tick information

- Buffer updates to store temporal information with observations

- Hypothesis expansion from (location, rotation) to (location, rotation, state)

- Object model changes to associate features with both location and temporal state
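
For the last two items, this is roughly what I have in mind, as a sketch with made-up names (`Hypothesis`, `expand_hypotheses`, `BehaviorModel`, and the `hue` feature are all hypothetical, and the rotation representation is an assumption). Each (location, rotation) hypothesis is paired with every possible state, and the object model stores features per (location, state) so the same location can look different at different points in a behavior.

```python
from itertools import product
from typing import Dict, List, NamedTuple, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


class Hypothesis(NamedTuple):
    """Hypothetical hypothesis extended from (location, rotation) with a state index."""

    location: Vec3
    rotation: Vec3  # e.g. Euler angles; the representation is an assumption
    state: int


def expand_hypotheses(
    locations: Sequence[Vec3], rotations: Sequence[Vec3], num_states: int
) -> List[Hypothesis]:
    # Every (location, rotation) pair is combined with every possible state,
    # so the hypothesis space grows by a factor of num_states.
    return [
        Hypothesis(loc, rot, state)
        for loc, rot in product(locations, rotations)
        for state in range(num_states)
    ]


class BehaviorModel:
    """Hypothetical object model: features are stored per (location, state)."""

    def __init__(self) -> None:
        self.features: Dict[Tuple[Vec3, int], Dict[str, float]] = {}

    def add(self, location: Vec3, state: int, features: Dict[str, float]) -> None:
        self.features[(location, state)] = features

    def lookup(self, location: Vec3, state: int) -> Optional[Dict[str, float]]:
        return self.features.get((location, state))


origin = (0.0, 0.0, 0.0)
hyps = expand_hypotheses([origin, (1.0, 0.0, 0.0)], [origin], num_states=3)
print(len(hyps))  # 6

model = BehaviorModel()
model.add(origin, state=0, features={"hue": 0.1})
model.add(origin, state=1, features={"hue": 0.9})  # same place, different state
print(model.lookup(origin, 1))  # {'hue': 0.9}
```

The multiplicative growth of the hypothesis space is the main cost I expect from this change.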

Questions I have

What defines a “significant event” that resets the timer? Should it be based on feature changes detected by the SM, state changes detected by the LM, or something else?

How many time cells should the timer have by default? The documentation shows 12 as an example, but is this configurable per experiment?

When the timer reaches the maximum tick without a reset, should it stay at the last tick or do something else?

Proposed approach

Start with a simple IntervalTimer class with configurable num_time_cells (default 12)

Use logarithmic resolution so short intervals have more precision than long ones (as suggested in the documentation)
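
One way to get logarithmic resolution, sketched under my own assumptions: elapsed time is measured in steps, and `max_interval` (a hypothetical parameter, not from the documentation) is where the last cell begins. Cell start times are spaced geometrically, so each cell is wider than the previous one and short intervals are resolved more finely.

```python
import bisect
from typing import List


def log_cell_starts(num_time_cells: int, max_interval: float) -> List[float]:
    """Geometrically spaced start times of cells 1..N-1; the last equals max_interval.

    Cell 0 covers [0, starts[0]); the last cell absorbs everything >= max_interval.
    """
    if num_time_cells < 2 or max_interval <= 1:
        raise ValueError("need num_time_cells >= 2 and max_interval > 1")
    n = num_time_cells - 1
    return [max_interval ** (i / n) for i in range(1, n + 1)]


def elapsed_to_tick(elapsed: float, starts: List[float]) -> int:
    # The number of cell starts already passed is the index of the active cell.
    return bisect.bisect_right(starts, elapsed)


starts = log_cell_starts(num_time_cells=5, max_interval=16.0)
print([round(s, 6) for s in starts])  # [2.0, 4.0, 8.0, 16.0]
print([elapsed_to_tick(t, starts) for t in (1, 3, 6, 12, 100)])  # [0, 1, 2, 3, 4]
```

With 5 cells and `max_interval=16`, the cells cover [0, 2), [2, 4), [4, 8), [8, 16), and [16, ∞), so each cell is twice as wide as the one before it.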

Add tick to non_morphological_features in State to minimize changes to the existing structure
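
A sketch of what storing the tick there could look like. `StateSketch` is a hypothetical stand-in that only mirrors the two feature dictionaries, not the real `State` constructor, and the `"tick"` key name is my assumption.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Optional


@dataclass
class StateSketch:
    """Hypothetical stand-in for Monty's State, with only the fields used here."""

    location: tuple
    morphological_features: Dict[str, Any]
    non_morphological_features: Dict[str, Any] = field(default_factory=dict)


TICK_KEY = "tick"  # hypothetical key name


def attach_tick(state: StateSketch, tick: Optional[int]) -> StateSketch:
    # Store the tick next to the other non-morphological features; the
    # structure of the state object itself does not change. No-op without a timer.
    if tick is not None:
        state.non_morphological_features[TICK_KEY] = tick
    return state


def get_tick(state: StateSketch) -> Optional[int]:
    # Returns None for states produced without a timer.
    return state.non_morphological_features.get(TICK_KEY)


s = StateSketch(
    location=(0.0, 0.0, 0.0),
    morphological_features={},
    non_morphological_features={"color": "red"},
)
attach_tick(s, 3)
print(get_tick(s))  # 3
print(sorted(s.non_morphological_features))  # ['color', 'tick']
```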

Make the timer optional in MontyBase so existing experiments keep working

Broadcast the timer to all LMs, including those with static morphology models; static LMs will simply ignore the temporal information

Use the existing FeatureChangeFilter pattern as inspiration for detecting significant events
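
A sketch of the kind of detector I mean. It borrows only the general idea of per-feature change thresholds; `SignificantEventDetector`, `delta_thresholds` as used here, and the `brightness` feature are hypothetical, and I am not claiming this matches `FeatureChangeFilter`'s actual implementation. Observations are compared against the features at the last event, so slow drift can still add up to a reset.

```python
from typing import Dict, List, Optional


class SignificantEventDetector:
    """Hypothetical detector: an event fires when any tracked feature moves
    more than its threshold away from its value at the last event."""

    def __init__(self, delta_thresholds: Dict[str, float]) -> None:
        self.delta_thresholds = delta_thresholds
        self._reference: Optional[Dict[str, float]] = None

    def is_significant(self, features: Dict[str, float]) -> bool:
        if self._reference is None:
            # Assumption: the first observation only sets the reference.
            self._reference = dict(features)
            return False
        changed = any(
            abs(features[name] - self._reference[name]) > threshold
            for name, threshold in self.delta_thresholds.items()
        )
        if changed:
            # Compare later observations against this event, so slow drift
            # still adds up to a reset eventually.
            self._reference = dict(features)
        return changed


detector = SignificantEventDetector({"brightness": 0.3})
event_ticks: List[int] = []
tick = 0
for brightness in [0.1, 0.15, 0.2, 0.9, 0.95, 0.2]:
    if detector.is_significant({"brightness": brightness}):
        tick = 0  # significant event: reset the interval timer
    event_ticks.append(tick)
    tick += 1
print(event_ticks)  # [0, 1, 2, 0, 1, 0]
```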

I am open to discussing any of these points. If there are many things to clarify, I am also available for a meeting to talk through the details.

Looking forward to your feedback!